ChatLLM: From-Scratch LLM Training and Post-Training Stack

Built an end-to-end LLM stack from scratch, covering scaling law experiments, compute-optimal model sizing, pre-training, supervised fine-tuning, and GRPO-based post-training. Trained a ChatGPT-style conversational model and deployed an interactive demo to showcase instruction following, reasoning, and multi-turn generation.

LLM

Pre-Training

Scaling Laws

SFT

GRPO

Mixed Precision Training

ZeRO-2
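The "compute-optimal model sizing" step can be illustrated with a minimal sketch. It assumes the widely cited Chinchilla rule of thumb (training compute C ≈ 6·N·D and roughly 20 training tokens per parameter) rather than the project's own fitted scaling-law coefficients, which may differ. The function name `chinchilla_optimal` and the `tokens_per_param` default are illustrative assumptions.

```python
import math


def chinchilla_optimal(compute_flops: float, tokens_per_param: float = 20.0) -> tuple[float, float]:
    """Return (params, tokens) for a FLOP budget under an assumed Chinchilla-style rule."""
    # Training compute is approximated as C ≈ 6 * N * D (forward + backward FLOPs).
    # Fixing D ≈ k * N (k = tokens_per_param) gives C ≈ 6 * k * N^2,
    # so N = sqrt(C / (6 * k)) and D = k * N.
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    n, d = chinchilla_optimal(1.2e20)
    print(f"params ≈ {n:.3g}, tokens ≈ {d:.3g}")  # params ≈ 1e+09, tokens ≈ 2e+10
```

In practice the ratio `tokens_per_param` would come from fitting loss curves across model sizes in the scaling-law experiments; the fixed 20 here is only a common starting point.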

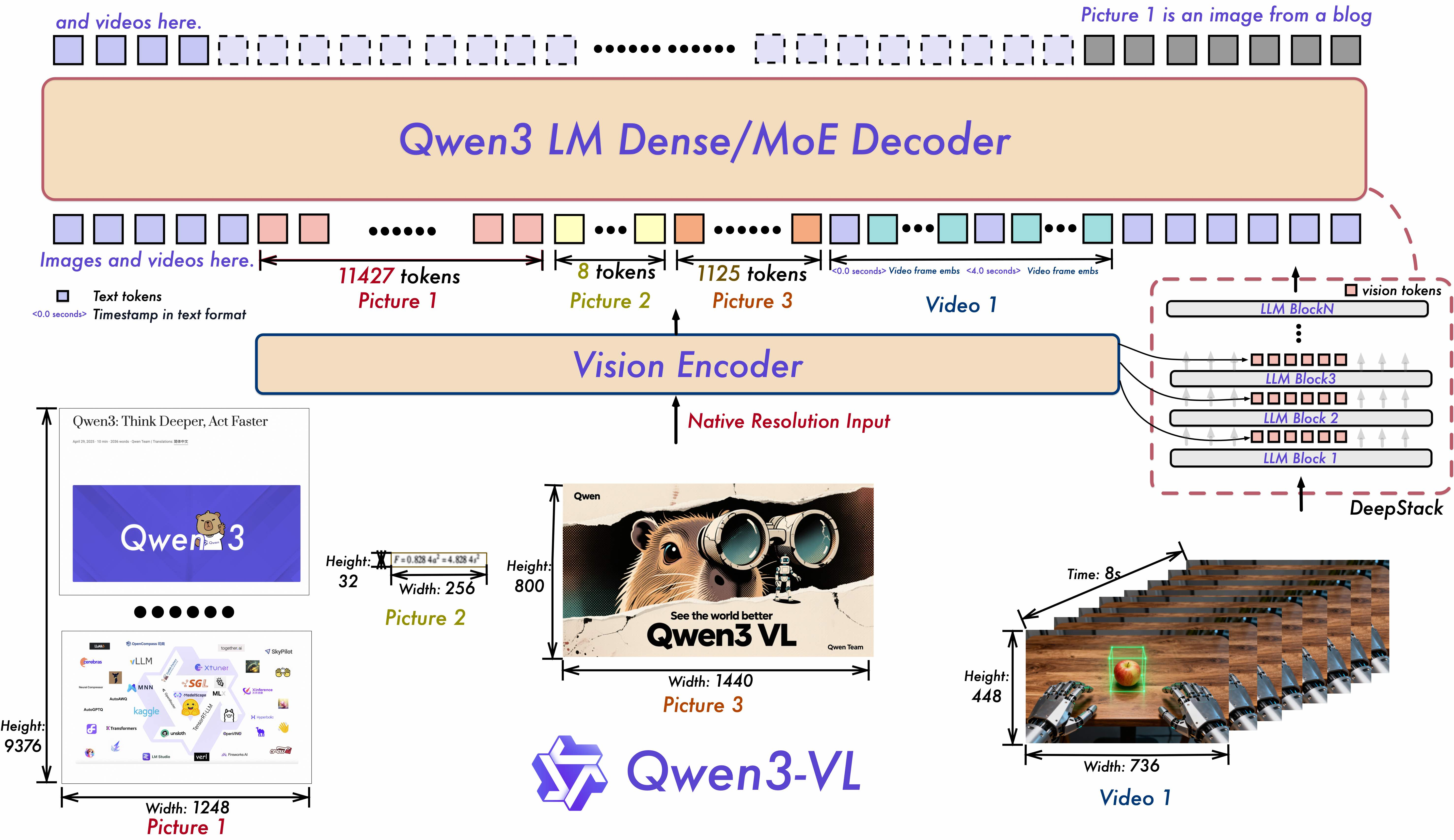

Qwen3-VL Inference

Implemented the Qwen3-VL architecture from scratch in PyTorch, loaded the pretrained 14B (224x224) checkpoint into it, fine-tuned it with LoRA for specific tasks, and built a Gradio app to showcase its capabilities.

Qwen3-VL

PyTorch

LoRA

DeepStack

M-RoPE
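M-RoPE gives every token three position indices (temporal, height, width) instead of one. The sketch below builds those indices for a sequence of text spans and single-image grids, following the Qwen2-VL-style scheme: text tokens share one index across all three axes, image patches are indexed by their grid coordinates offset from the preceding text, and the next text span resumes after the largest index used. Qwen3-VL's exact variant (frequency allocation, video timestamps) may differ, and the function name and segment format are hypothetical.

```python
from typing import List, Sequence, Tuple, Union

# A segment is ("text", n_tokens) or ("image", (grid_t, grid_h, grid_w)); this format is hypothetical.
Segment = Tuple[str, Union[int, Tuple[int, int, int]]]


def mrope_position_ids(segments: Sequence[Segment]) -> Tuple[List[int], List[int], List[int]]:
    t_ids: List[int] = []
    h_ids: List[int] = []
    w_ids: List[int] = []
    next_pos = 0  # first index available to the next segment
    for kind, spec in segments:
        if kind == "text":
            # Text tokens: identical index on all three axes, increasing by one per token.
            for i in range(spec):
                p = next_pos + i
                t_ids.append(p)
                h_ids.append(p)
                w_ids.append(p)
            next_pos += spec
        elif kind == "image":
            grid_t, grid_h, grid_w = spec
            # Image patches: each axis carries its own grid coordinate, offset by next_pos.
            for t in range(grid_t):
                for h in range(grid_h):
                    for w in range(grid_w):
                        t_ids.append(next_pos + t)
                        h_ids.append(next_pos + h)
                        w_ids.append(next_pos + w)
            # Following tokens start one past the largest index used by this image.
            next_pos += max(grid_t, grid_h, grid_w)
        else:
            raise ValueError(f"unknown segment kind: {kind!r}")
    return t_ids, h_ids, w_ids


if __name__ == "__main__":
    print(mrope_position_ids([("text", 2), ("image", (1, 2, 2)), ("text", 1)]))
    # ([0, 1, 2, 2, 2, 2, 4], [0, 1, 2, 2, 3, 3, 4], [0, 1, 2, 3, 2, 3, 4])
```

Because image patches only advance the index by the largest grid dimension rather than by the patch count, long image token runs do not push later text positions far apart.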

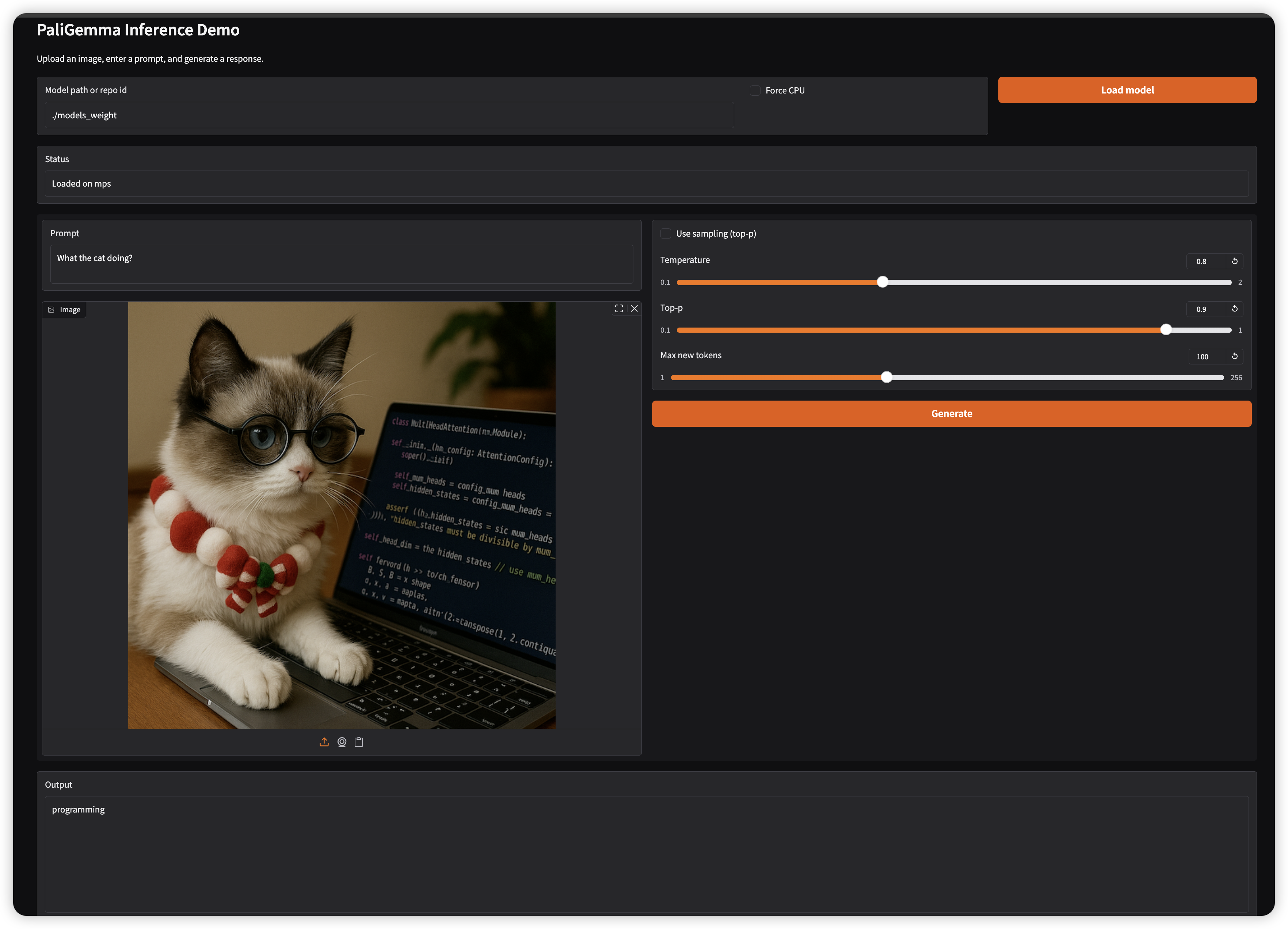

PaliGemma Inference and LoRA Fine-Tuning

This project implements PaliGemma, a multimodal large language model that combines vision and language tasks. First, we run inference with the pretrained PaliGemma model. Then, we fine-tune the model with LoRA (Low-Rank Adaptation) for specific applications such as receipt OCR extraction. Finally, we build a Gradio web interface to demonstrate the fine-tuned model's capabilities.

LoRA

PyTorch

Gradio

Grouped Query Attention

RoPE

KV Cache
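Grouped Query Attention and the KV cache interact directly: during decoding the cache stores one K and one V tensor per layer for each *key/value* head, so sharing KV heads across groups of query heads shrinks cache memory proportionally. The sketch below computes cache size and the query-to-KV head mapping. The layer count, head counts, head dimension, and sequence length in the usage lines are illustrative assumptions, not necessarily PaliGemma's actual configuration.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int, seq_len: int,
                   batch_size: int = 1, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys and values (bytes_per_elem=2 assumes fp16/bf16)."""
    # Factor 2: one K tensor and one V tensor per layer, each [batch, kv_heads, seq, head_dim].
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


def query_to_kv_head(q_head: int, num_q_heads: int, num_kv_heads: int) -> int:
    """Index of the KV head that query head `q_head` attends with under GQA."""
    if num_q_heads % num_kv_heads != 0:
        raise ValueError("num_q_heads must be a multiple of num_kv_heads")
    group_size = num_q_heads // num_kv_heads  # query heads sharing one KV head
    return q_head // group_size


if __name__ == "__main__":
    # Illustrative config: 18 layers, 8 query heads, head_dim 256, 1024 cached tokens.
    mha = kv_cache_bytes(18, num_kv_heads=8, head_dim=256, seq_len=1024)
    gqa = kv_cache_bytes(18, num_kv_heads=1, head_dim=256, seq_len=1024)
    print(mha / 2**20, gqa / 2**20)  # 144.0 18.0  (MiB)
    print([query_to_kv_head(h, 8, 2) for h in range(8)])  # [0, 0, 0, 0, 1, 1, 1, 1]
```

With one KV head shared by all eight query heads (the multi-query extreme of GQA), the cache is 8x smaller than full multi-head attention for the same sequence length.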

No matching items