CS336: LLM from Scratch Lecture Notes and Assignments

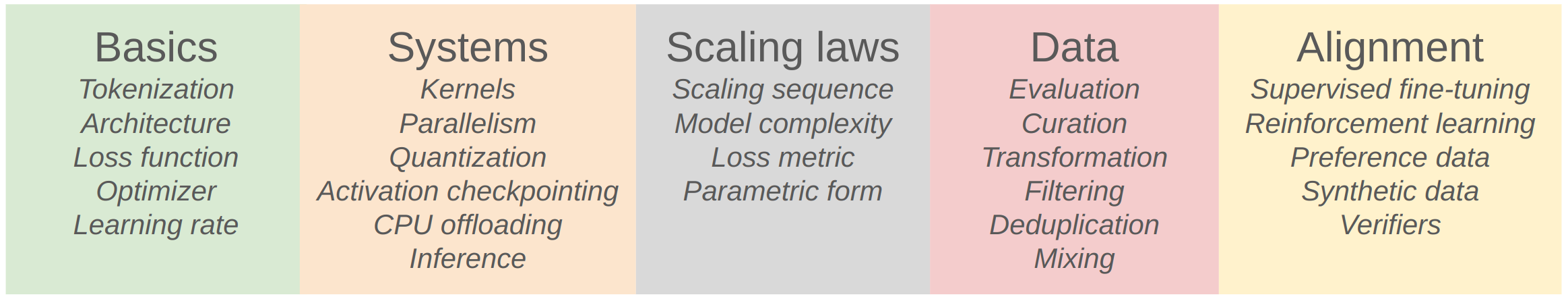

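CS336 is a comprehensive course on building large language models from scratch. It covers everything from the fundamentals of the Transformer architecture to MoE models, GPU acceleration, parallel training, model evaluation, data collection and processing, and recent advances such as LLM alignment algorithms. This page contains all the study notes and assignment solutions for the CS336 course.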

Related Resources:

- Lecture Website: CS336 LLM from Scratch

- Lecture Recordings: YouTube Playlist

- My Solution Repo: GitHub

About this Course:

- This course has 17 Lectures and 5 Assignments in total.

- It might take around 200 hours to finish all the lectures and assignments.

For those who have

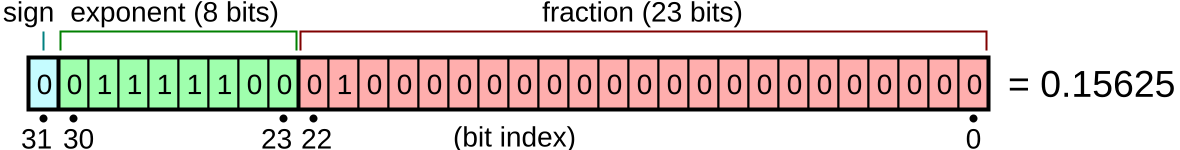

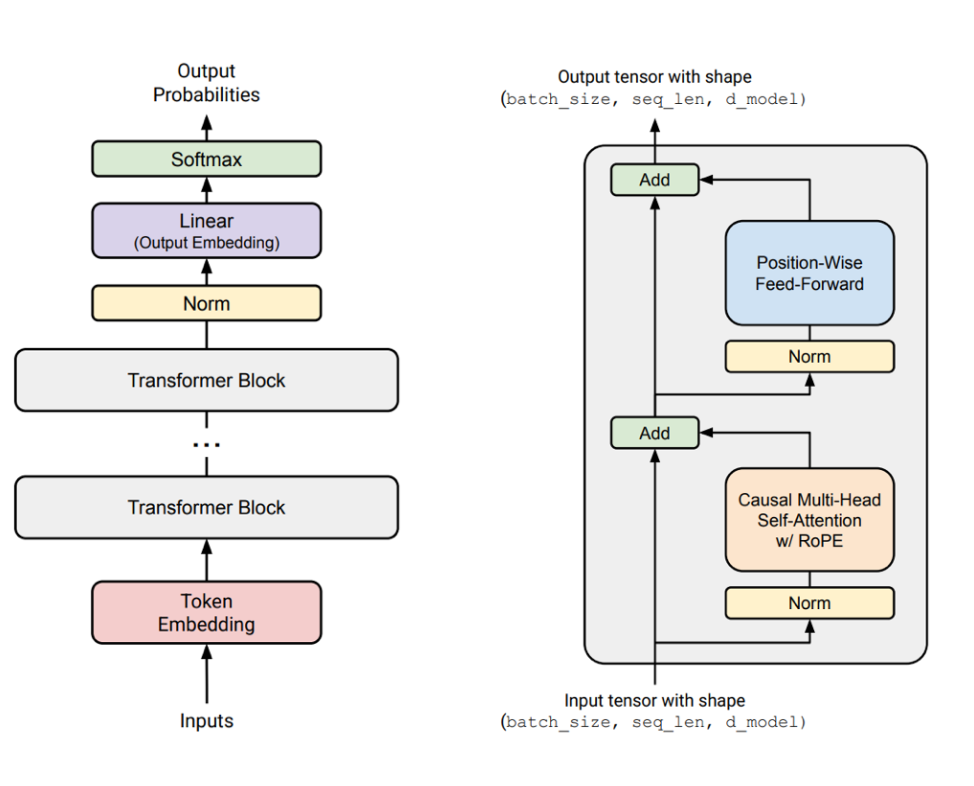

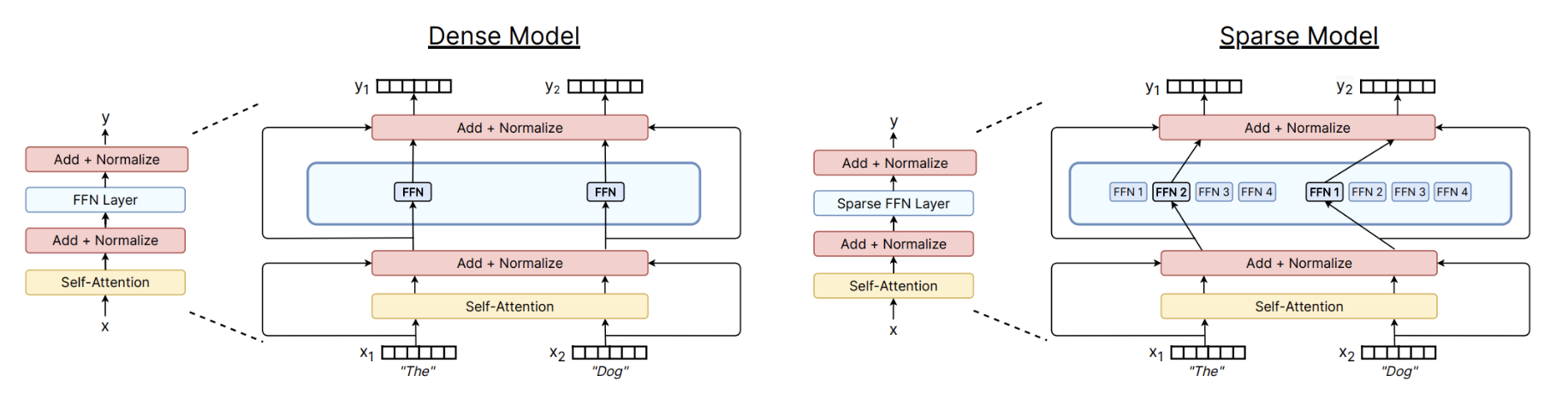

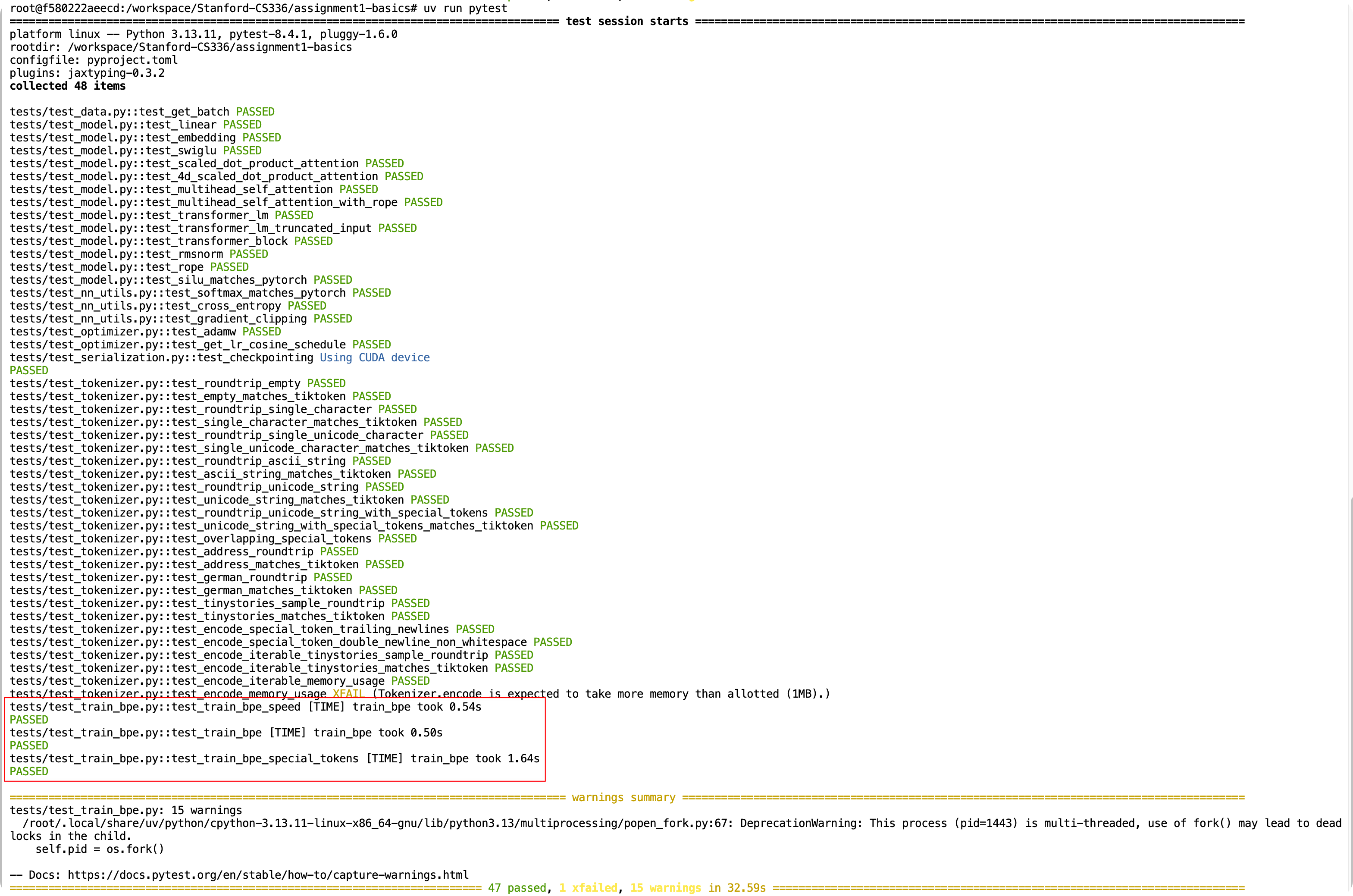

- LECTURE 1, 2, 3, 4 & Assignment01: After completing these, you will have a solid understanding of the fundamentals of LLMs, such as the Transformer Language Model architecture, attention mechanism, Mixture of Experts, and the training process of LLMs using autoregressive language modeling.

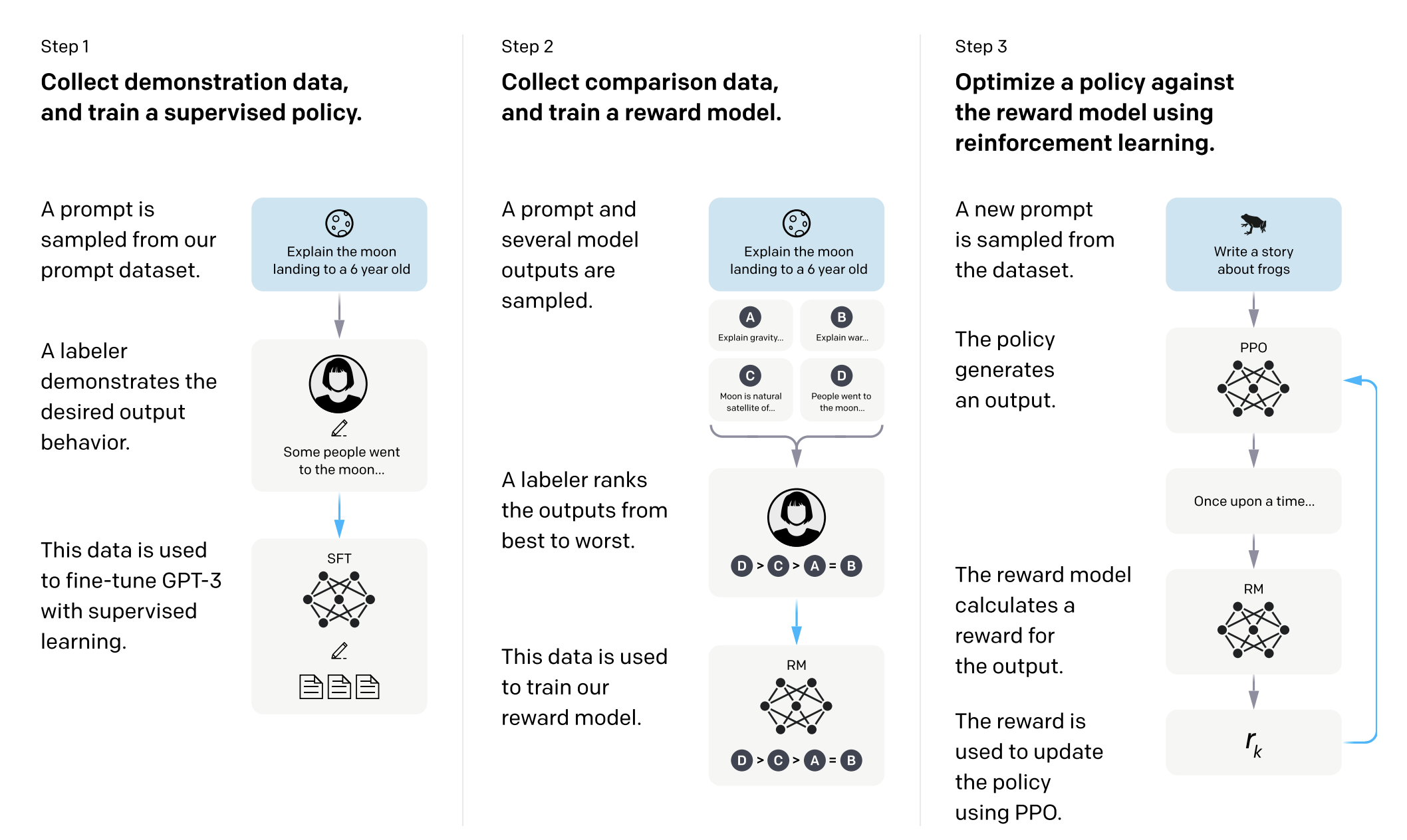

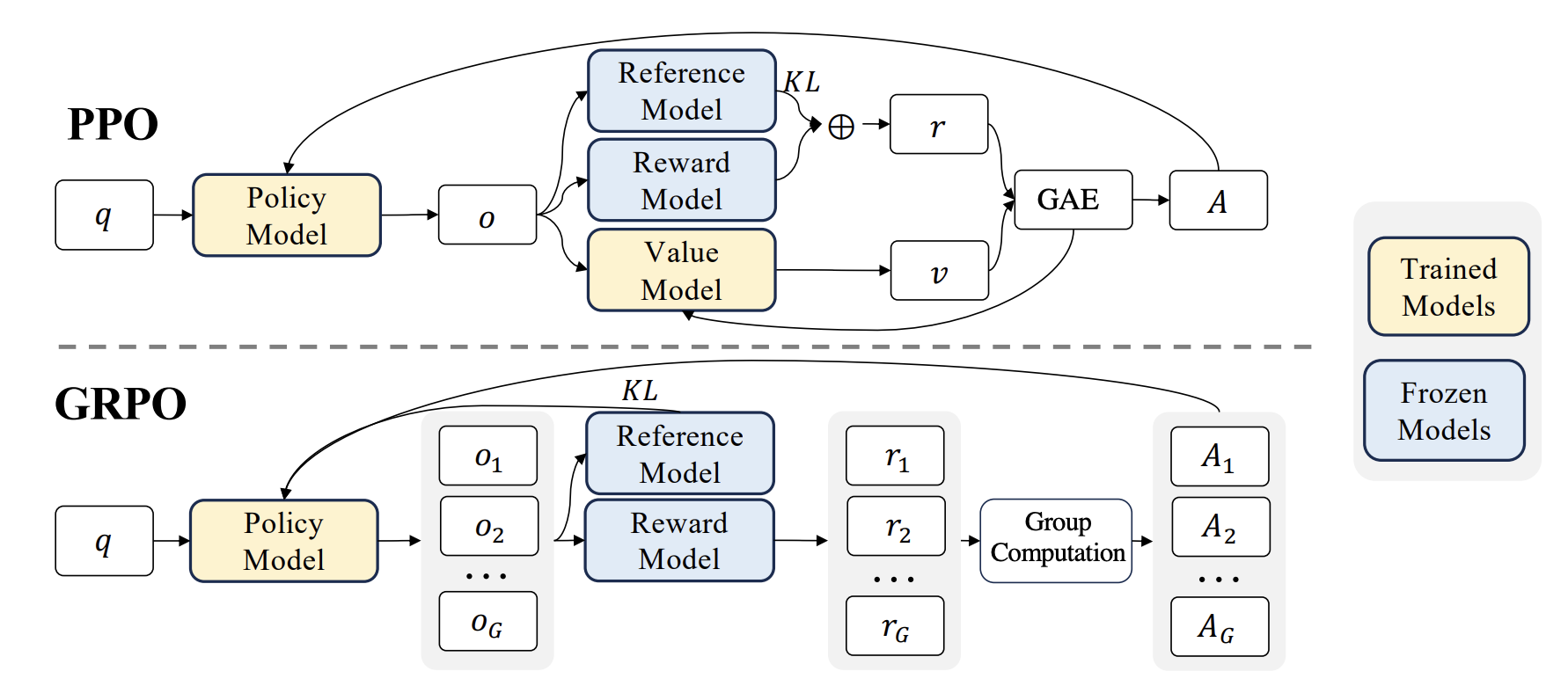

- LECTURE 15, 16, 17 & Assignment05: These cover advanced topics in LLM alignment, including SFT, RLHF (PPO, DPO), and RLVR (GRPO, Dr. GRPO). After completing these, you will understand how to align LLMs with human preferences and how to train a reasoning LLM.

- LECTURE 5, 6, 7, 8 & Assignment02: These focus on the hardware and parallelism techniques for training large models. After completing these, you will understand how to train large LLMs efficiently on distributed systems using data parallelism, model parallelism, and pipeline parallelism, how to speed up training by leveraging GPUs, and how FlashAttention works and is implemented.

- The remaining lectures and assignments are also important, but they can be studied later, depending on your interests and needs. These include:

- Lecture 9 & 11: Scaling Laws

- Lecture 10: Inference Optimization

- Lecture 12 & Assignment 03: Evaluation of LLMs

- Lecture 13, 14 & Assignment 04: Data Collection and Processing

Lecture Notes

Assignment Solutions
